LLM Security Best Practices

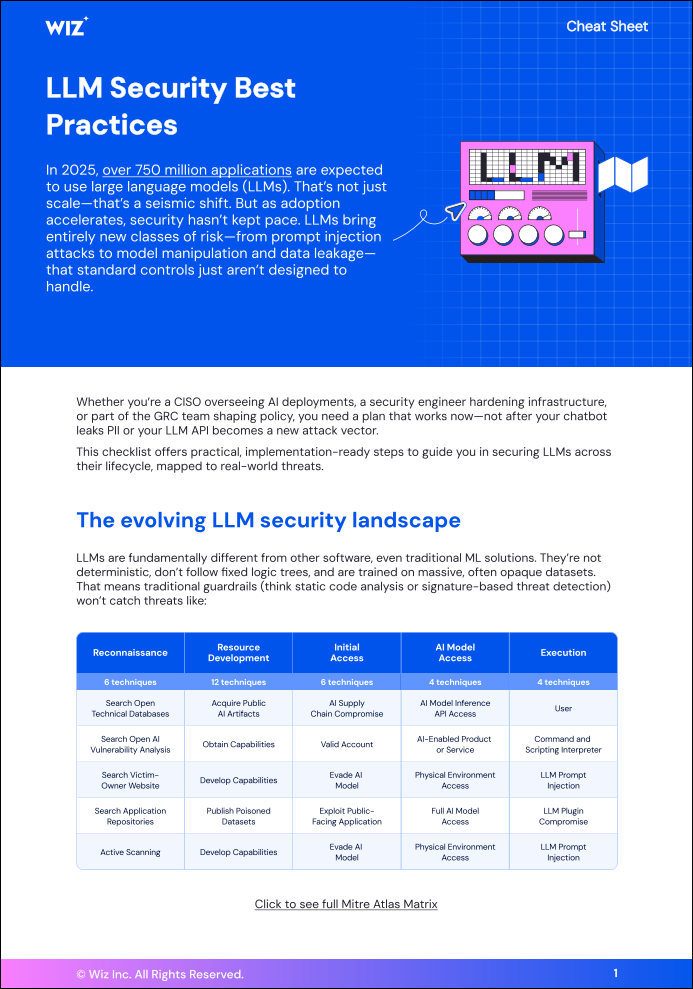

In 2025, over 750 million applications are expected to use large language models (LLMs). That’s not just scale; it’s a seismic shift. But as adoption accelerates, security hasn’t kept pace. LLMs bring entirely new classes of risk, from prompt injection attacks to model manipulation and data leakage, that standard controls just aren’t designed to handle.

This checklist offers practical, implementation-ready steps to guide you in securing LLMs across their lifecycle, mapped to real-world threats.